People and computers speak different languages – the former use words and sentences, while the latter are more into ones and zeros. As we know, this communication gap is bridged by a mediator that knows how to translate the information flowing between the two sides. These mediators are called graphical user interfaces (GUIs).

Finding an appropriate GUI can be quite a challenge – and it’s basically the key factor in determining whether your software will be used. If users don’t understand the interactions they need to perform in order to get the most out of it, they will not use it. That’s why GUIs must be intuitive and easy to learn.

Historically, there have been three major breakthroughs in the quest for the most suitable user interface.

The first one came from Xerox’s research lab, where Steve Jobs recognized the huge potential of a mouse cursor clicking around a desktop, opening folders, copying and pasting files and much more. It was a revolutionary approach that made computers accessible to a much broader audience, and it’s still used today.

The second one was also something Steve Jobs managed to introduce on a massive scale: “We’re gonna use the best pointing device in the world. We’re gonna use a pointing device that we’re all born with – we’re born with ten of them. We’re gonna use our fingers.” These words from Steve Jobs at the iPhone’s unveiling introduced the multi-touch concept, which is now widely used on all mobile devices. It was another natural but revolutionary step in providing the most intuitive experience for users.

These two approaches made interaction with machines (whether computers or mobile devices) much easier and more accessible. But one thing these GUIs have in common is that people need to learn how to interact with them. For example, they need to know that a single click on a folder will select that folder and a double click will open it. They need to know that there’s a ‘back’ hardware button on Android phones, but a similar software button (styled differently) in every iOS application. Having many different options and different implementations of the two concepts can be confusing for users. Anyone who has switched from Windows to a Mac (or vice versa) knows that you need a few days (or even weeks) to learn how to use the other operating system efficiently. The same applies to phones – although they all follow the multi-touch concept, the transition from one OS to another can take some time.

Another challenge that current GUIs face is the new set of devices introduced to the market – wearables. When you have a screen as small as a watch, tapping on it to perform some task can be quite a tedious experience. And these devices are aimed mostly at modern, always-rushing users who need information fast, with minimal fuss.

This creates the need for a completely different user interface – one that will unify all the different platforms and perform tasks for users with minimal interaction. And what’s the most natural way of expressing your needs? Of course, by using your voice to form words and sentences.

So the third major breakthrough in user interfaces will be (or maybe already is) conversational interfaces. The idea is not new – we’ve seen it in a lot of movies, usually in the form of some virtual assistant that informs the main character of some new danger ahead. Generally speaking, movies can be an interesting source of inspiration for the next innovations in technology. We know how the interactive newspapers in Harry Potter inspired Facebook to introduce self-starting videos in their news feed. Or how the gesture-driven UI in Minority Report is basically what we now do when interacting with our mobile devices. Keep that in mind: inspiration can come from unexpected places.

Since the idea of conversational interfaces is not that new, why did it take big tech companies so long to start building products around it? The main reason is that understanding sentences is not a trivial task. Sometimes even one small word (like ‘not’, for example) can completely change the meaning of a sentence. Punctuation can also change the meaning of words. And computers are not like humans: they don’t talk with each other in a free-form manner all the time, learning new phrases and meanings along the way. They are pretty exact entities that do what they are told to do.
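To make the ‘not’ problem concrete, here is a minimal sketch in Python. It is only an illustration: the word lists and function names are made up for this example, and real NLP systems do not work this simply. It compares a naive keyword matcher with one that checks whether the word just before a positive keyword is a negation.

```python
import re

# Tiny, hypothetical vocabularies chosen only for this illustration.
POSITIVE_WORDS = {"good", "great", "helpful"}
NEGATIONS = {"not", "never", "no"}


def tokenize(sentence):
    """Lowercase the sentence and split it into simple word tokens."""
    return re.findall(r"[a-z']+", sentence.lower())


def keyword_sentiment(sentence):
    """Naive approach: any positive keyword makes the sentence positive."""
    tokens = tokenize(sentence)
    return "positive" if any(t in POSITIVE_WORDS for t in tokens) else "neutral"


def negation_aware_sentiment(sentence):
    """Flip the result when a negation directly precedes the keyword."""
    tokens = tokenize(sentence)
    for i, token in enumerate(tokens):
        if token in POSITIVE_WORDS:
            negated = i > 0 and tokens[i - 1] in NEGATIONS
            return "negative" if negated else "positive"
    return "neutral"


if __name__ == "__main__":
    sentence = "This assistant is not helpful"
    print(keyword_sentiment(sentence))         # the naive matcher misses 'not'
    print(negation_aware_sentiment(sentence))  # the one-word lookback catches it
```

Even this fix only handles a negation that sits directly before the keyword; something like ‘not very helpful’ already slips through, which hints at why the general problem is hard.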

However, artificial intelligence, machine learning and natural language processing have made impressive progress in the past few years, making it easier for computers to figure out what users are saying. That’s why we see new technologies in this area from every major tech company.

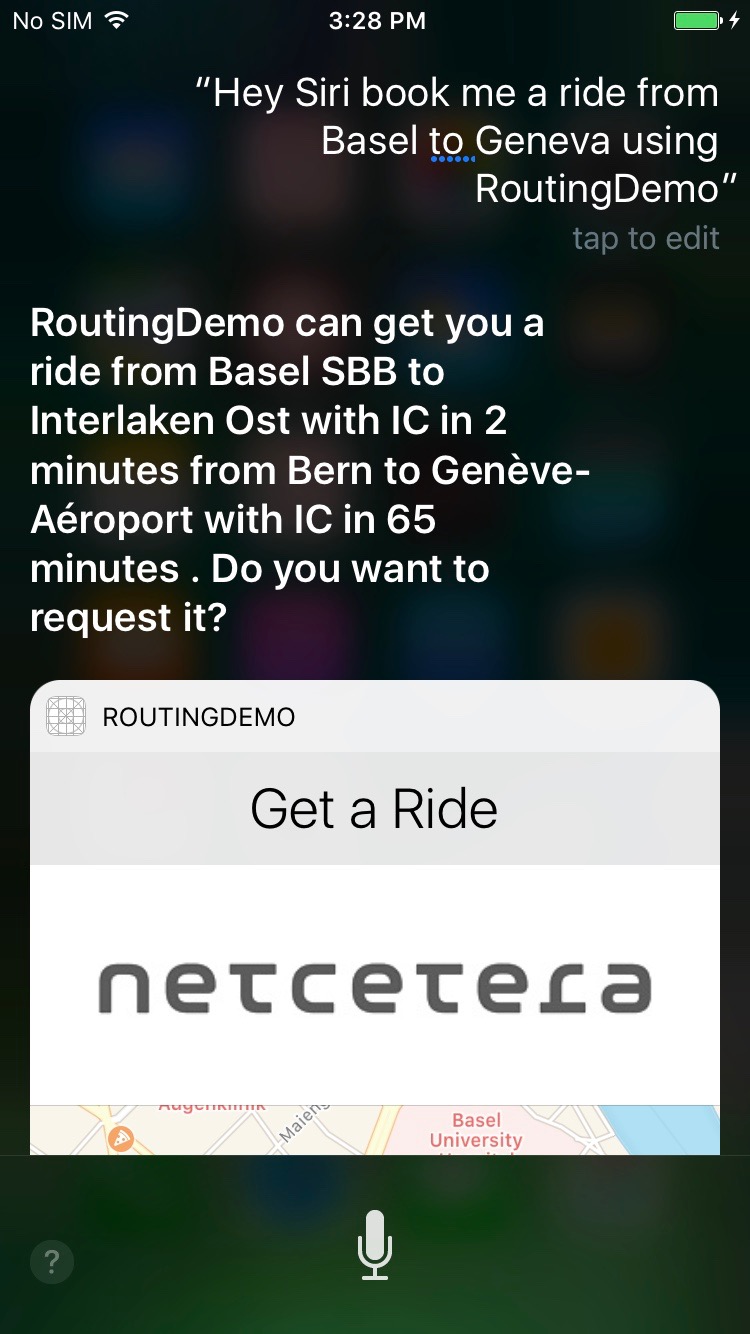

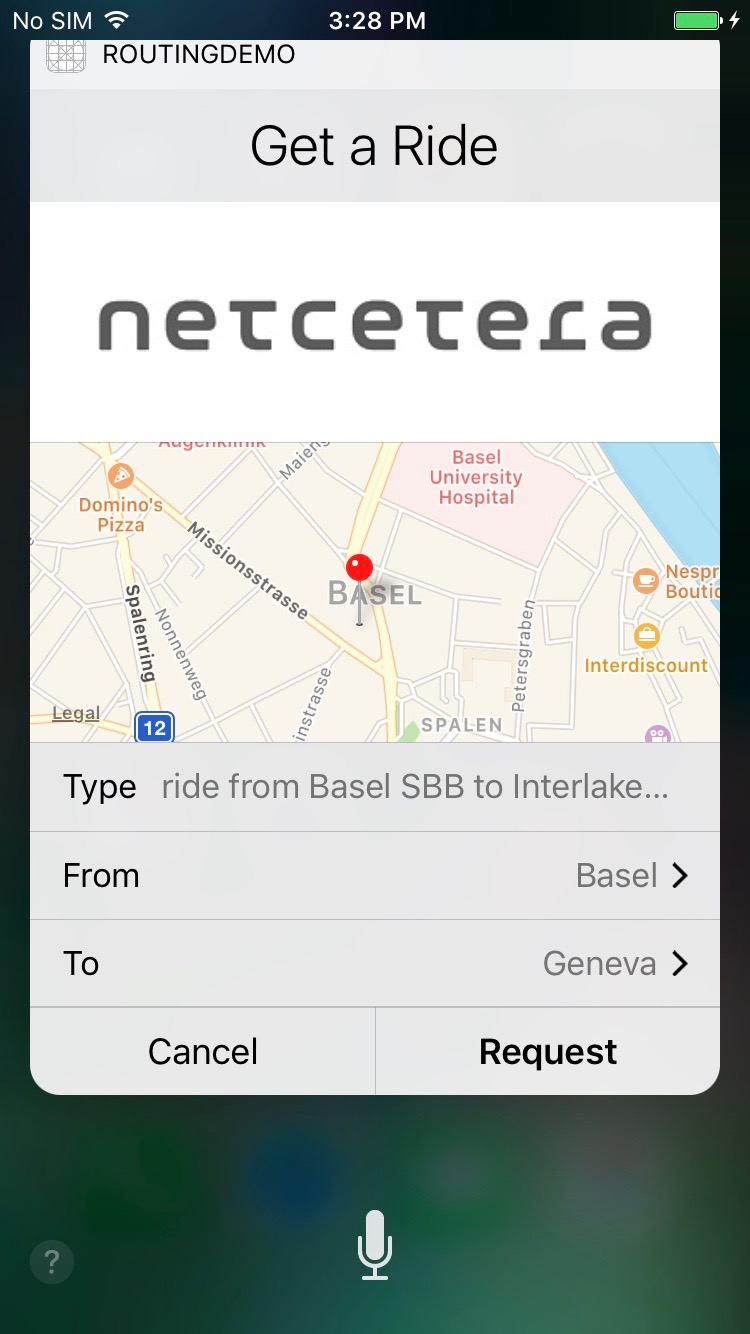

Apple has Siri, which is now also open to iOS developers through the SiriKit framework. Developers can now handle, inside their apps, the voice commands that users give to Siri. For example, imagine a user rushing to find a ride to the airport. They will say something like ‘Hey Siri, book me a ride to the airport using YourCoolApp’. Siri will ask your cool app whether it can handle the request – the app might ask for some additional information, like where you want to be picked up (or maybe just use the user’s current location) or what type of ride you want (car, taxi, train etc.). Then Siri presents the information from your app inside Siri (your app is not opened, everything happens in Siri’s context), and if the user agrees to the ride offer, your app reserves the ride and Siri notifies the user about the status. You can see more details about such a prototype I was working on here.

Google has OK Google and Google Assistant, which support similar functionality and extension points for developers. One interesting new product from Google is api.ai, a web application through which developers can train the platform to recognize and extract parts of sentences and return them in JSON format. This gives developers more flexibility and relieves them of the burden of developing complex NLP solutions themselves. Check how this platform works in this YouTube video.
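To give a feeling for what ‘extracting parts of a sentence and returning them as JSON’ means, here is a rough sketch in Python. Everything in it is an assumption made for illustration: the `book_ride` intent, the entity names and the JSON shape are hypothetical and are not api.ai’s actual response format. A hand-written regular expression also stands in for the trained model, which a real platform would learn from example sentences.

```python
import json
import re

# Hypothetical pattern for a single 'book_ride' intent. A trained platform
# would generalize from examples; this regex only covers one phrasing.
RIDE_PATTERN = re.compile(
    r"book (?:me )?a (?P<ride_type>car|taxi|train)(?: ride)?"
    r" from (?P<origin>[\w ]+?) to (?P<destination>[\w ]+)$",
    re.IGNORECASE,
)


def parse_utterance(text):
    """Return a JSON string with the recognized intent and its entities."""
    cleaned = text.strip().rstrip(".!?")
    match = RIDE_PATTERN.search(cleaned)
    if match is None:
        # Nothing recognized: report an empty result instead of guessing.
        return json.dumps({"intent": None, "entities": {}})
    return json.dumps({"intent": "book_ride", "entities": match.groupdict()})


if __name__ == "__main__":
    print(parse_utterance("Book me a train from London to Paris"))
    print(parse_utterance("What's the weather like?"))
```

The app then only has to read the structured result (ride type, origin, destination) instead of dealing with the raw sentence.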

A similar product to api.ai is Microsoft’s LUIS. It’s also a web application through which you can train the platform to recognize entities in a sentence. Apart from that, Microsoft also has Cortana, their virtual assistant named after a character in the video game Halo. Amazon has Alexa, a virtual assistant that (like any other) can sometimes completely misunderstand you.

These comical situations are not rare, since there’s still a lot of room for improvement in this area. Users have to be as concise as possible and have to find the right sentence structure that the virtual assistants will understand. To cope with the broad range of possible sentences, the frameworks offer a somewhat restrictive flow – they have a few predefined domains (use cases), which are triggered by predefined sentences. They encourage you to avoid open questions and to provide users with a set of options instead. For example, if you want a train ride from London to Paris, Siri can ask you “What type of ride do you want?” and your app can provide a few options that Siri will display to the user, like a First Class or Second Class ticket. Also, the SDKs are designed so that you find out the user’s preferences by asking one question at a time, proceeding to the next question only after you have the answer to the current one. For example, once you know the type of ride, Siri might next ask ‘Where do you want to go?’ if you haven’t specified that in the voice command. Notice also that the questions are simple and clear, and each asks for only one piece of information.
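The ‘one question at a time’ flow can be sketched roughly like this in Python. This is not SiriKit code; the slot names, prompts and functions are hypothetical and only illustrate the idea of asking for the first missing piece of information and moving on once it is answered.

```python
# Hypothetical list of required pieces of information, in asking order.
REQUIRED_SLOTS = [
    ("ride_type", "What type of ride do you want?"),
    ("destination", "Where do you want to go?"),
]


def next_question(slots):
    """Return (slot name, prompt) for the first missing slot, or None."""
    for name, prompt in REQUIRED_SLOTS:
        if not slots.get(name):
            return name, prompt
    return None


def run_dialog(initial_slots, answers):
    """Ask one question at a time until every required slot is filled.

    `initial_slots` holds what was already understood from the voice
    command; `answers` simulates the user's replies, in order.
    """
    slots = dict(initial_slots)
    transcript = []
    replies = iter(answers)
    while (pending := next_question(slots)) is not None:
        name, prompt = pending
        transcript.append(prompt)    # the assistant asks a single question
        slots[name] = next(replies)  # and waits for that one answer
    return slots, transcript


if __name__ == "__main__":
    # The destination was already given in the command, so only the
    # ride type still has to be asked for.
    slots, asked = run_dialog({"destination": "Paris"}, ["First Class"])
    print(asked)
    print(slots)
```

Because each prompt asks for exactly one slot, the answer can be matched to it without any further parsing, which is a big part of why the frameworks favor this restrictive style.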

This new way of interacting with users is a new challenge for developers and user experience experts. There are still no common best practices; we still need to experiment and figure out what works and what doesn’t. In any case, the future is exciting and we will see a lot more improvement and innovation in this area.

Great article highlighting the most important aspects of conversational interfaces. For me it’s interesting that there have been attempts to create this kind of software for a very long time, yet people usually abandoned it after a few tries because it made a lot of mistakes. Nowadays the technology is a lot more advanced, yet there are still some hilarious misunderstandings. Anyway, I’m certain that it will find its way in the near future, so I’d recommend that software developers start working with it, because most users will.
